Last week I attended the Virtual Reality LA tradeshow. VR has come a long way even in the last year. The Oculus Rift and Vive are both shipping (and under $600), and the next generation of 4K and 6K viewers looks even better. I went to the conference to learn a bit about audio and music for VR.

Currently there are four leading audio systems for VR: Facebook 360, G’Audio, Dysonics, and Dolby Atmos. There are other players such as DTS:X, but apparently they are far behind. I believe Unity and Unreal Engine also have their own 3D sound engines. Most of these tools are free to download and play with, though the majority require Pro Tools HD 12 for Mac. I downloaded Facebook 360 and G’Audio, and here’s what I found.

Facebook 360

It is very strange having a Facebook plug-in in my DAW. I first tried this (2.0 beta 2) in Cubase 9, and the plug-ins loaded but didn’t work at all. Nothing I did had any effect. So I tried them in Pro Tools HD and they were much happier. Pro Tools even has a preset template for this plug-in: go to the Post Production templates and there’s a FB 360 preset.

Before I talk much more about VR audio, I should mention that there is actually a standard underlying all of these, called Ambisonics or B-format. This initially started with the SoundField microphone, a tetrahedral cluster of four mic capsules whose signals are combined into one omnidirectional pattern plus three figure-8 patterns (front-back, left-right, and up-down). These are decoded into a 360-degree sound field. Most mixers consider Ambisonic audio to be pretty basic, but it does work on a majority of players like YouTube.
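To make the B-format idea a little more concrete, here is a minimal sketch of how a single mono sample could be encoded into the four first-order channels. It assumes the traditional convention (azimuth measured counterclockwise from straight ahead, W scaled by 1/√2); the function name `encode_b_format` is made up for illustration and is not taken from any of the tools discussed here.

```python
import math

def encode_b_format(sample, azimuth_deg, elevation_deg=0.0):
    """Encode one mono sample into first-order B-format (W, X, Y, Z).

    Assumption: traditional convention, with azimuth measured
    counterclockwise from straight ahead and W scaled by 1/sqrt(2).
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2)                 # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)  # front-back figure-8
    y = sample * math.sin(az) * math.cos(el)  # left-right figure-8
    z = sample * math.sin(el)                 # up-down figure-8
    return w, x, y, z

# A source straight ahead lands entirely in W and X;
# a source hard left lands in W and Y instead.
front = encode_b_format(1.0, 0)
left = encode_b_format(1.0, 90)
```

Other conventions exist. AmbiX, for example, orders the channels W, Y, Z, X and leaves W unscaled, so check which one your target player expects.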

Facebook 360 Spatial Workstation outputs its own audio format, and obviously it works for Facebook 360 videos. (Although you can also output Ambisonic audio from any of these tools.) There are four plug-ins in the suite. Each track you are moving gets a Spatializer plug-in, and these feed a master track with the FB360 Control plug-in. The Spatializer allows you to select an audio channel format (mono, stereo, 5.0, etc.) and then move each of those channels around in a 3D space. You can fly things around your head and move them up and down. There is a video window in the plug-in that allows you to follow an element in the video. For example, if a bug flies from left to right, you could trace that movement with the cursor to have the audio follow.
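Tracing a moving element like that bug amounts to recording position keyframes and interpolating between them. The sketch below is only an illustration of that idea, not how the Spatializer stores its automation; the `azimuth_at` helper and the keyframe layout are assumptions.

```python
from bisect import bisect_right

def azimuth_at(t, keyframes):
    """Linearly interpolate azimuth (degrees) at time t (seconds).

    keyframes is a list of (time, azimuth) pairs sorted by time.
    Times outside the keyframed range hold the first or last value.
    """
    times = [k[0] for k in keyframes]
    if t <= times[0]:
        return keyframes[0][1]
    if t >= times[-1]:
        return keyframes[-1][1]
    i = bisect_right(times, t)  # index of the first keyframe after t
    (t0, a0), (t1, a1) = keyframes[i - 1], keyframes[i]
    return a0 + (a1 - a0) * (t - t0) / (t1 - t0)

# Hypothetical path: a bug crossing from hard left (+90 degrees)
# to hard right (-90 degrees) over two seconds.
bug_path = [(0.0, 90.0), (2.0, -90.0)]
halfway = azimuth_at(1.0, bug_path)  # straight ahead at the midpoint
```

This simple version does not handle a path that wraps around behind the listener (past ±180 degrees), which a real panner would need to.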

FB360 sounded OK on simple subject matter, like a solo conga playing around. I tried putting one of my 5.0 music mixes through it and didn’t love the results. It sounded phasey and hollow (although maybe I’m doing it wrong) and the surround was not impressive enough to be worth it. Most of the successful VR music demos I heard at the show were four instruments in a sparse arrangement, so that’s probably the best bet for VR scoring. You get the option for music to ignore head tracking (known as “head-locked”) or to bypass the plug-in completely, which might work better for dense orchestral scoring in a game.
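The difference between head-tracked and head-locked audio can be sketched as a rotation of the sound field. Assuming first-order B-format channels (W, X, Y, Z) with Y pointing left, a head-tracked source is counter-rotated by the listener's head yaw, while a head-locked source is passed through untouched. The function `apply_head_yaw` is hypothetical and only handles yaw, not pitch or roll.

```python
import math

def apply_head_yaw(w, x, y, z, head_yaw_deg, head_locked=False):
    """Rotate a first-order B-format sample to compensate for head yaw.

    Assumption: positive yaw means the listener turns to the left, so the
    sound field is rotated the opposite way. Head-locked audio skips the
    rotation and therefore moves with the listener's head.
    """
    if head_locked:
        return w, x, y, z
    yaw = math.radians(head_yaw_deg)
    x_rot = x * math.cos(yaw) + y * math.sin(yaw)
    y_rot = y * math.cos(yaw) - x * math.sin(yaw)
    return w, x_rot, y_rot, z  # W and Z are unaffected by yaw

# A source straight ahead (X = 1, Y = 0) with the listener turned 90
# degrees to the left: head-tracked, it ends up on the listener's right
# (negative Y); head-locked, it stays in front.
tracked = apply_head_yaw(0.707, 1.0, 0.0, 0.0, 90)
locked = apply_head_yaw(0.707, 1.0, 0.0, 0.0, 90, head_locked=True)
```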

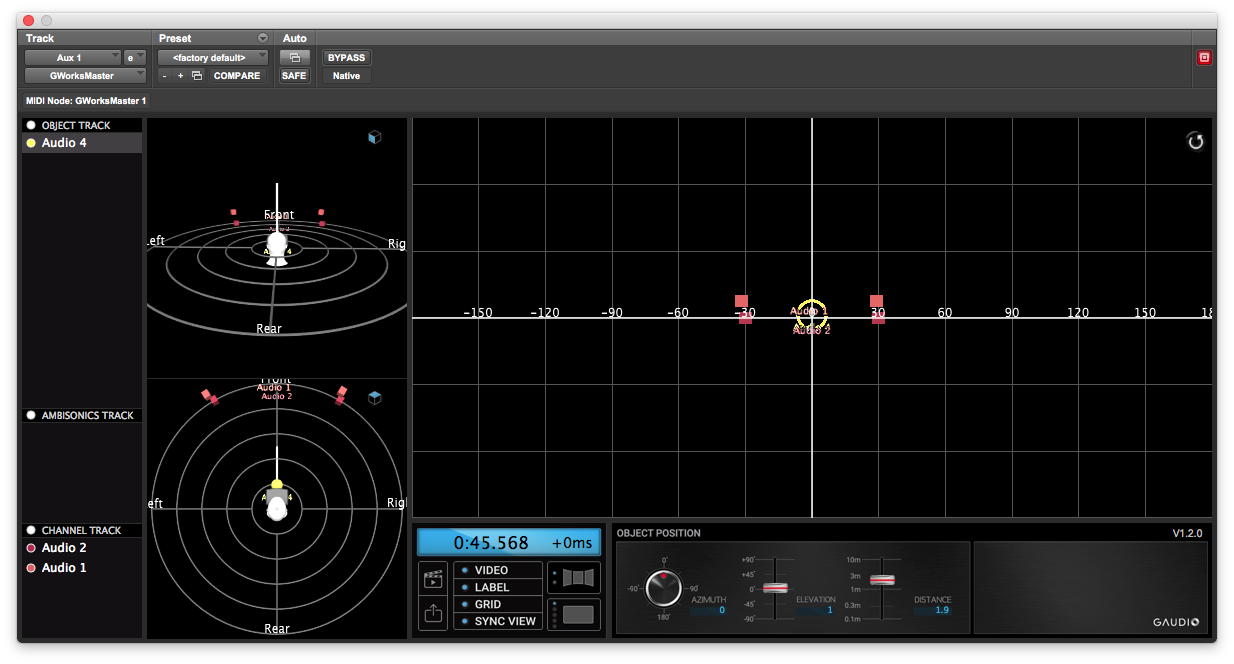

G’Audio Works

I liked the sound of G’Audio more than Facebook 360. It’s the first binaural panner that seems to get the rear channel correct. Often with 3D panners you can’t tell if the sound is supposed to be directly behind you or off to one side; others have little difference between directly in front and directly behind. But G’Audio got it right. They are licensing their engine to games, which is how they hope to make their money. So they have “interactive” plug-ins for the Unreal, Wwise, and Unity engines that track game elements, as well as this suite for “Cinematic” 360 videos.

Unfortunately, their plug-ins (version 1.2.0) feel very much in beta. They only work in Pro Tools HD for Mac. I couldn’t get them to insert on a 5.0 track, so I couldn’t test surround music playback. Worst of all, you need to insert the “slave” plug-ins on all of your audio tracks before you insert the “master” on your main aux buss. This is pretty crummy because all of the motion editing is done in the master plug-in, not in the per-track plug-ins as with FB360. So if you need to add another audio track, you need to delete the master plug-in and lose all of your work. But the sound is good enough that I would still rather use this plug-in than Facebook’s.

As with Facebook, you get a video viewer so that you can track elements. There are two views: one that shows the entire sound field and another showing your point of view when looking through a VR viewer.

Dysonics

I first saw Dysonics with their unique surround microphone. Imagine a binaural head mic, but instead of two elements on the sides there are eight elements all the way around. You can decode this to get 3D audio that sounds very good. They’re working on a smaller version – the current mic is head-sized and only available for rent.

They also have their own 3D engine – Dysonics Rondo 360. They are funded by Intel and seem like a great team. There’s a free trial, but after that the software costs $40/month, billed yearly. The good news is that the software sounds very nice, with rear audio as good as G’Audio. A 5.0 surround mix still sounded as thin and weird as it did in Facebook; maybe I just need to remix when using this format.

The Dysonics software currently works in every DAW except Pro Tools HD, which seems a strange omission as Pro Tools is 99.999% of post mixing. There is a send and a return plug-in, and these send to a separate application that runs outside your DAW. It has a video player and many of the same positioning controls as the others. But the downside of a separate app is that the two files need to be synced up; the positioning isn’t saved in your DAW mix as it is with the others. You also need to name your track inside of the plug-in because it doesn’t get the track name in VST.

Dolby Atmos

This plug-in suite is only available to beta testers, and the beta group is closed. The advantage to Atmos is that I assume there will be a way to output both discrete outputs for speaker playback as well as binaural for VR – with these other tools you need to mix everything twice. I heard a demo of a film score being recorded at AIR Studios Lyndhurst in London, with full orchestra, percussion, and choir. It sounded great, with none of the phasing I heard in Facebook and great front-to-back directionality. I doubt this one will be free, though. That doesn’t sound like Dolby.

Another advantage to Dolby is that it reportedly handles high track counts. A post production mix for a film or game can easily number 400+ tracks, and I can’t imagine that working in most of these interfaces.

This is a fast-moving industry. Many of the new VR viewers were based on brand-new technology not available at CES only three months ago. It’s something I need to pay attention to. I already know a few people in this field, and it would be nice to learn it while everyone is still figuring it out.

Carlos Santa Rita

November 25, 2018 at 6:52 am

I am Carlos Santa Rita,

For about 10 years now I have been the only Brazilian sound effects producer to break into the American and international market; I produce for Sound Ideas, Soundsnap, and AudioMicro. I am starting to produce Ambisonic audio, and I think it is a new path with a variety of creative possibilities. Which plug-in would you recommend for converting my library of almost 6,000 files, which I have already produced in 24-bit, 96 kHz stereo HD, to Ambisonic?

jlaity

January 29, 2019 at 10:02 pm

I’m sorry, I am not an expert in Ambisonics. I hope you can find an answer somewhere.
